Meet your agents

AI agents that understand your business. Enterprise work that gets done.

AI Agents built for enterprise

Coherent experience across workflows and systems, with trusted data and persistent context.

Business-aware language understanding

Industry-trained NLP models detect intent, extract entities, and maintain context across complex enterprise conversations. They support multilingual interactions across channels and regions and outperform generic LLM-only systems on structured enterprise data.

Agentic AI grounded in your data

Use Azure OpenAI, Anthropic Claude, or Druid’s private LLM Becus, combined with Retrieval-Augmented Generation (RAG) so answers stay factual, sourceable and governed. No hallucinations. No guessing.

Keep context across channels and sessions

Agents maintain full conversation history so users can ask follow-up questions, change direction, or expand a request without repeating information.

Turn intent into execution inside one auditable experience

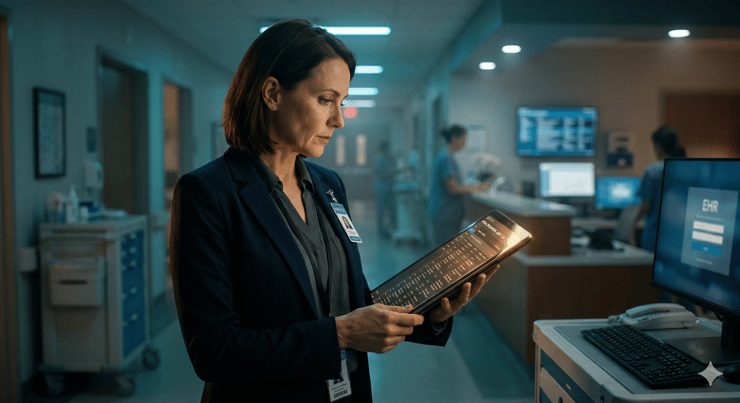

Live AI guidance for human teams

Live Assist surfaces grounded answers and next-best actions, and automates wrap-up work before and after the interaction.

Guidance in the moment

Surface response suggestions and recommended workflows while the interaction is still happening.

Grounded retrieval with citations

Give human agents the same trusted policy and knowledge access as AI agents, backed by source citations.

Automated wrap-up and QA visibility

Generate summaries and CRM or case updates automatically while giving supervisors live coaching and QA visibility.

Built to fit the enterprise, not work around it

Choose models, connect systems, and enforce controls without sacrificing deployment speed or operational visibility.

Model flexibility

Use Azure OpenAI, Anthropic Claude, or Druid's private LLM Becus while keeping governance and orchestration consistent.

Conductor orchestration

Orchestrate knowledge retrieval, decision logic, and system actions with Druid Conductor so multi-step work runs reliably across channels and teams.

Traceability and policy control

Review, govern and audit every response, retrieval step, and workflow to meet enterprise requirements.

Frequently asked questions

Get answers to the most common questions about Druid's AI agents and their capabilities before your demo.

What are Druid AI Agents?

Druid AI Agents are enterprise-grade AI employees that understand your business context, connect to your systems, and execute real work across workflows and channels, completing end-to-end, multi-step business processes with little to no human intervention.

What NLP architecture powers Druid AI Agents?

Druid uses industry-trained NLP models that perform intent detection and named-entity extraction and track business context across enterprise conversations. These models are fine-tuned on structured enterprise data and outperform generic LLM-only pipelines on domain-specific accuracy and enterprise context.

What LLM backends are supported and how are they abstracted?

You can bring your own LLM, such as Azure OpenAI, Anthropic Claude, or Druid’s private LLM Becus, among others. The Conductor orchestration layer abstracts model selection from agent logic, so you can swap or A/B test models, or even use multiple LLMs for a specific business process, without modifying conversation flows, integrations, or governance rules.
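
As a rough illustration of what abstracting model selection from agent logic can look like, here is a minimal Python sketch. It is not Druid's or Conductor's actual API: the `ModelRouter` class, the `answer_ticket` function, and the stub backends are all hypothetical names invented for this example, and the stubs return canned strings instead of calling a real model.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Hypothetical type: a backend is any callable that maps a prompt to a reply.
Backend = Callable[[str], str]


@dataclass
class ModelRouter:
    """Illustrative stand-in for an orchestration layer; not a real Druid class."""
    backends: Dict[str, Backend]
    active: str

    def complete(self, prompt: str) -> str:
        # Agent logic calls this method and never names a vendor directly.
        return self.backends[self.active](prompt)

    def switch(self, name: str) -> None:
        # Swapping models only changes which registered backend is active.
        if name not in self.backends:
            raise KeyError(f"unknown backend: {name}")
        self.active = name


# Stub backends so the sketch runs without network access or credentials.
def cloud_stub(prompt: str) -> str:
    return f"[cloud] {prompt}"


def private_stub(prompt: str) -> str:
    return f"[private] {prompt}"


def answer_ticket(router: ModelRouter, question: str) -> str:
    # The conversation flow is identical whichever backend is active.
    return router.complete(f"Answer the customer: {question}")


router = ModelRouter({"cloud": cloud_stub, "private": private_stub}, active="cloud")
print(answer_ticket(router, "Where is my order?"))
router.switch("private")
print(answer_ticket(router, "Where is my order?"))
```

The point of the sketch is that `answer_ticket` does not change when the backend does, which is the property the answer above describes.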

How does Druid’s multi-agent architecture compare to single-task AI tools?

Single-task tools (sourcing agents, search assistants, scheduling bots) wrap one model around one workflow. Druid’s architecture decomposes any business goal into subtasks dispatched to specialist agents, such as the Knowledge, Process, Voice, and Workspace AI Agents, with shared context, governance, and observability. This means one platform replaces multiple point solutions.
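
The dispatch pattern described above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not Druid's implementation: the agent functions, the `AGENTS` registry, the `run_plan` helper, and the refund policy text are all made up, and the plan is written by hand where a real system would derive it from the business goal.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical types: a shared context dict and an agent signature.
Context = Dict[str, str]
Agent = Callable[[str, Context], str]


def knowledge_agent(task: str, ctx: Context) -> str:
    # Writes what it found into the shared context (made-up policy text).
    ctx["policy"] = "refunds allowed within 30 days"
    return f"knowledge: looked up '{task}'"


def process_agent(task: str, ctx: Context) -> str:
    # Reads what an earlier agent stored in the shared context.
    return f"process: ran '{task}' using policy '{ctx.get('policy', 'none')}'"


AGENTS: Dict[str, Agent] = {
    "knowledge": knowledge_agent,
    "process": process_agent,
}


def run_plan(plan: List[Tuple[str, str]]) -> List[str]:
    """Dispatch each (agent kind, subtask) pair in order with one shared context."""
    ctx: Context = {}
    return [AGENTS[kind](task, ctx) for kind, task in plan]


for line in run_plan([("knowledge", "refund policy"), ("process", "issue refund")]):
    print(line)
```

Because both agents receive the same `ctx` object, the second subtask can use what the first one retrieved without the user repeating it.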

How are agent responses audited and traced?

Every response is logged with its full provenance chain: input prompt, retrieved knowledge sources, confidence score, model used, latency, and the Conductor orchestration path. This audit trail is indexed, and you can query it in natural language through the observability dashboard for compliance review, debugging, and optimization.
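
To make the provenance chain concrete, the following Python sketch models one audit entry with the fields listed above. It is an assumption-laden illustration, not Druid's log schema: the `AuditRecord` class, its field names, the `below_confidence` helper, and the sample values are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class AuditRecord:
    # Field names are illustrative; they mirror the items named in the answer above.
    input_prompt: str
    retrieved_sources: List[str]
    confidence: float
    model: str
    latency_ms: float
    orchestration_path: List[str] = field(default_factory=list)

    def to_json(self) -> str:
        # Serialize with sorted keys so entries are stable and easy to index.
        return json.dumps(asdict(self), sort_keys=True)


def below_confidence(records: List[AuditRecord], threshold: float) -> List[AuditRecord]:
    """Return the records a reviewer might inspect first (hypothetical helper)."""
    return [r for r in records if r.confidence < threshold]


# Sample values are invented for the example.
records = [
    AuditRecord("What is the refund window?", ["policy.pdf#p3"], 0.92,
                "cloud-model", 410.0, ["retrieve", "generate"]),
    AuditRecord("Can I refund a gift card?", [], 0.41,
                "cloud-model", 388.5, ["retrieve", "generate"]),
]

for record in below_confidence(records, threshold=0.5):
    print(record.to_json())
```

A structured record like this is what makes later filtering possible, for example pulling every low-confidence answer that cited no source.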

What is the Druid Becus LLM and when should it be used?

Becus is Druid’s proprietary language model designed for air-gapped and on-premises deployments where data cannot leave your infrastructure. It runs on the same Conductor orchestration layer as cloud-hosted models, supports the same RAG pipeline, and eliminates third-party model training exposure for regulated environments.

Connect what matters. Make work feel effortless.

See how proven AI agents work for you

Inside real systems, in real scenarios, with accuracy, reliability, and control. So your work feels simpler, not harder.